Robotics perception

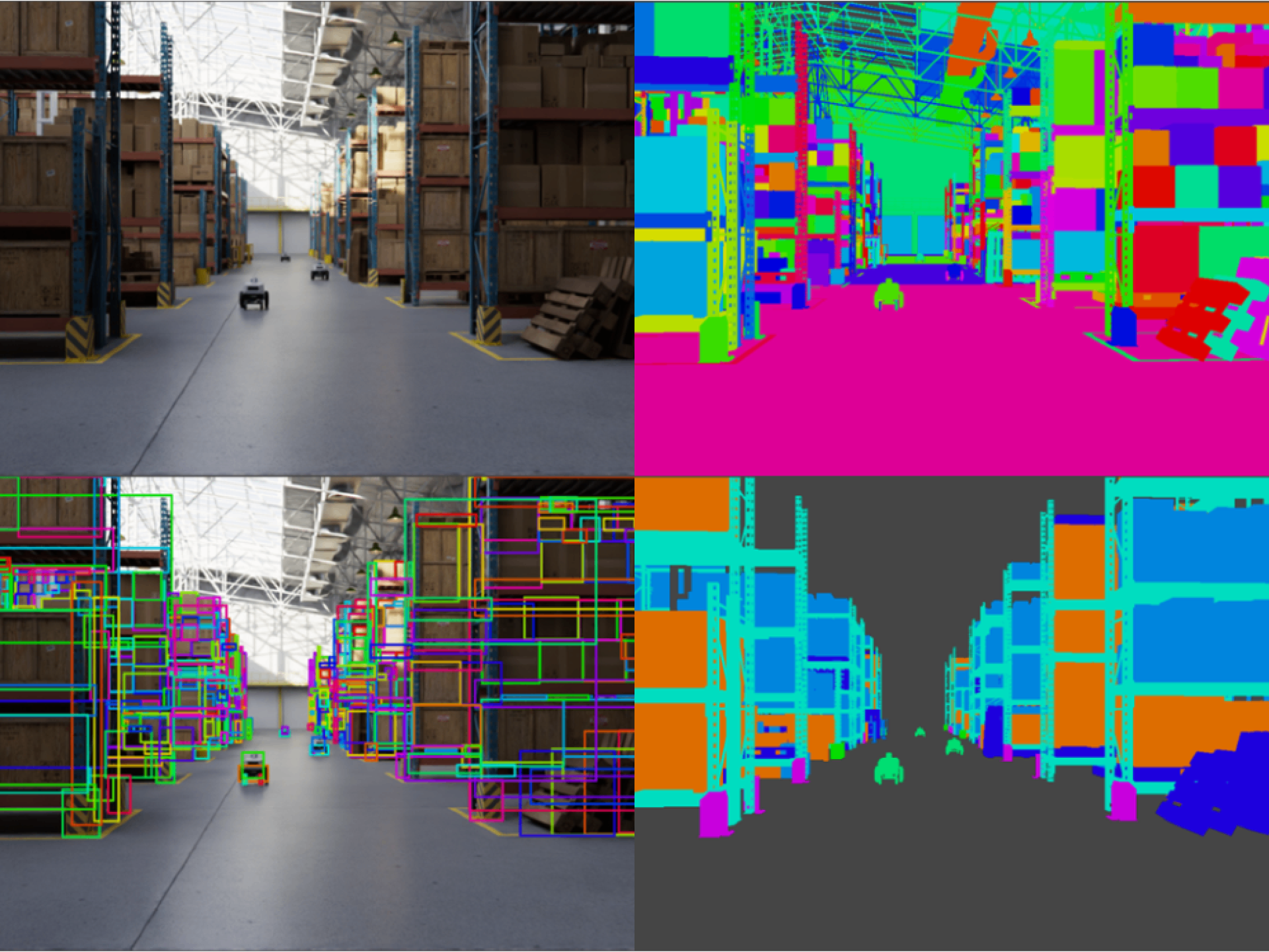

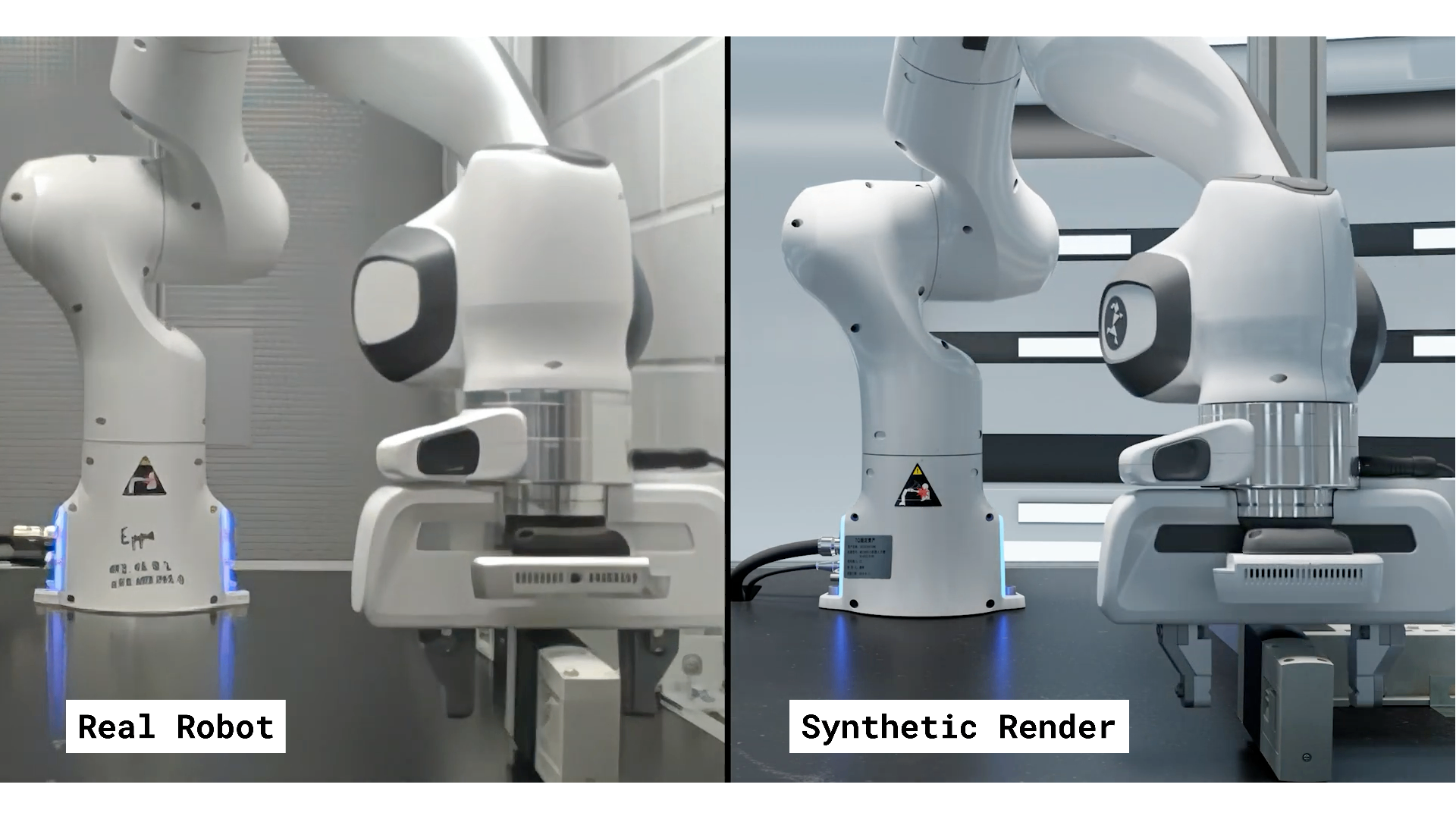

Synthetic datasets and simulation for the perception stack. Train and test models across controlled and rare scenarios before real-world deployment.

Robotics perception in one paragraph.

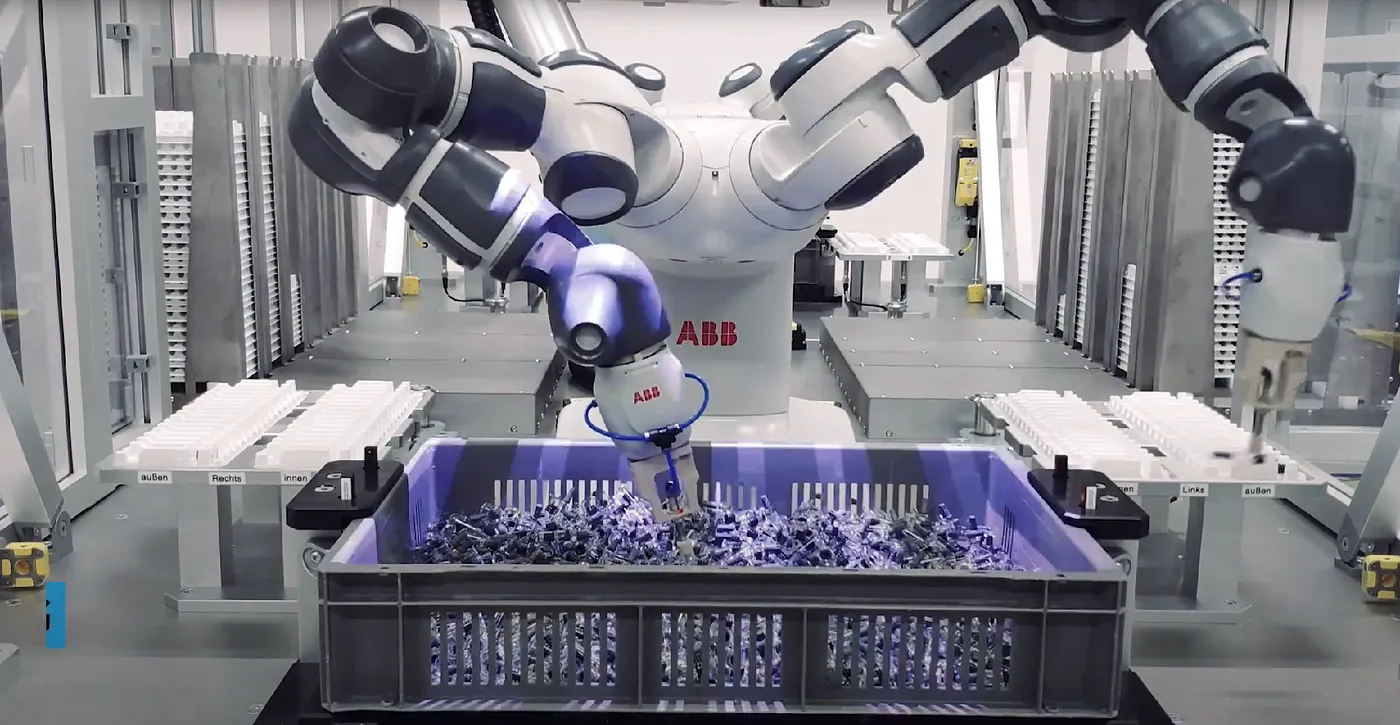

Perception is how a robot turns sensor input into a model of the world: detection, segmentation, depth, pose, and tracking. It runs on RGB cameras, depth sensors, stereo rigs, and lidar.

The control stack is mature. Perception is what limits where the robot can be deployed.

Perception is where autonomy is decided.

Perception drives behavior

A robot acts on what it sees. Bad perception turns into bad motion, missed grasps, and unsafe paths.

3D understanding is required

Pose, depth, and geometry matter as much as classification. The model needs the full scene, not a label.

Safety in shared spaces

Robots that work near people must recognize people, obstacles, and unexpected objects reliably.

Environments vary

Warehouses, workshops, and outdoor sites all look different and change between shifts.

Why building reliable perception is hard.

The model is rarely the bottleneck. Data and field time are.

Edge cases are everywhere

Reflective surfaces, occlusions, awkward poses, and stacked objects. Real capture misses most of them.

3D labels are expensive

Segmentation masks, 6D poses, and depth ground truth are slow and costly to label by hand.
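To illustrate what a 6D pose label carries, here is a minimal Python sketch. It assumes a pose is stored as a translation in metres plus roll, pitch, and yaw angles in radians, composed in Z-Y-X order. The `Pose6D` class and its field names are hypothetical, not a format from any specific tool.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Pose6D:
    """Hypothetical 6D pose: 3 translation values (m) + 3 rotation angles (rad)."""
    x: float
    y: float
    z: float
    roll: float
    pitch: float
    yaw: float

    def rotation_matrix(self):
        # Assumed convention: R = Rz(yaw) * Ry(pitch) * Rx(roll)
        cr, sr = math.cos(self.roll), math.sin(self.roll)
        cp, sp = math.cos(self.pitch), math.sin(self.pitch)
        cy, sy = math.cos(self.yaw), math.sin(self.yaw)
        return [
            [cy * cp, cy * sp * sr - sy * cr, cy * sp * cr + sy * sr],
            [sy * cp, sy * sp * sr + cy * cr, sy * sp * cr - cy * sr],
            [-sp, cp * sr, cp * cr],
        ]

    def transform(self, point):
        """Map a point from the object frame into the camera frame."""
        rot = self.rotation_matrix()
        t = (self.x, self.y, self.z)
        return tuple(
            sum(rot[i][j] * point[j] for j in range(3)) + t[i] for i in range(3)
        )


# An object 1 m along the x axis, rotated 90 degrees about the vertical axis.
pose = Pose6D(x=1.0, y=0.0, z=0.0, roll=0.0, pitch=0.0, yaw=math.pi / 2)
corner = pose.transform((1.0, 0.0, 0.0))  # approximately (1.0, 1.0, 0.0)
```

A human annotator has to estimate all six values per object per frame. A simulator already knows them for every render.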

Hardware changes break models

A new camera, a new mount, or a new lens shifts the input distribution and can degrade accuracy.
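A small Python sketch shows why. It assumes an ideal pinhole camera with no lens distortion. The two sets of intrinsics are made-up example values, not real sensor specifications.

```python
def project(point_cam, fx, fy, cx, cy):
    """Pinhole projection of a 3D point (camera frame, metres) to pixels."""
    x, y, z = point_cam
    if z <= 0:
        raise ValueError("point must be in front of the camera")
    return (fx * x / z + cx, fy * y / z + cy)


# The same physical point, 1 m in front of the camera.
point = (0.25, 0.125, 1.0)

# Hypothetical intrinsics for an old and a new sensor (illustrative values).
old_cam = dict(fx=600.0, fy=600.0, cx=320.0, cy=240.0)
new_cam = dict(fx=900.0, fy=900.0, cx=640.0, cy=360.0)

pixel_old = project(point, **old_cam)  # (470.0, 315.0)
pixel_new = project(point, **new_cam)  # (865.0, 472.5)
```

The object did not move, yet it lands on a different pixel and at a different apparent size. A model trained only on the old sensor has never seen inputs like these.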

Field testing is slow

Every iteration on a real robot costs setup, supervision, and repair time. Cycles run in weeks.

Synthetic data and photorealistic simulation.

A virtual cell mirrors the real one. The robot, the sensor, the lighting, and the objects are parameters. Each render comes with exact ground truth: bounding boxes, segmentation masks, depth, and 6D poses.
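Treating the cell as parameters can be sketched in a few lines of Python. This is a minimal illustration, not a real pipeline. The `SceneParams` fields, the value ranges, and the function names are all assumptions, and the rendering step itself is left out.

```python
import random
from dataclasses import asdict, dataclass


@dataclass
class SceneParams:
    """Hypothetical parameters for one rendered scene."""
    light_intensity_lux: float
    camera_height_m: float
    object_yaw_deg: float
    num_distractors: int


def sample_scene(rng):
    # Ranges are illustrative; a real cell would supply its own limits.
    return SceneParams(
        light_intensity_lux=rng.uniform(200.0, 1500.0),
        camera_height_m=rng.uniform(0.8, 1.6),
        object_yaw_deg=rng.uniform(0.0, 360.0),
        num_distractors=rng.randint(0, 8),
    )


def generate_scene_list(n, seed=0):
    """Sample n scene descriptions; a fixed seed makes the list reproducible."""
    rng = random.Random(seed)
    return [asdict(sample_scene(rng)) for _ in range(n)]


scenes = generate_scene_list(5, seed=42)
```

Each sampled scene would be handed to a renderer. Because the pose and layout were chosen by the program, the labels are known exactly rather than estimated.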

Generative models complement simulation. They take a few real samples and produce controlled variations in texture and appearance. Simulation covers physics. Generative models cover texture.

Three ways synthetic data changes the work.

Cover the long tail

Render the rare object poses, lighting, and clutter that your robot will eventually meet on the floor.

Train and test in simulation

Mirror the robot, the sensor, and the scene. Iterate on the perception stack without booking a real cell.

Adapt to new hardware fast

Swap the virtual sensor, regenerate the dataset, retrain. Keep the same pipeline across cameras and mounts.

What teams get out of it.

More reliable perception

Coverage of cases that real capture cannot reach.

Faster integration cycles

New robot or new sensor without restarting from zero.

Lower cost per iteration

Most experiments run in software, not on the cell.

Talk to us about your dataset.

Tell us the perception task and the conditions. We will come back with what is feasible, the timeline, and the cost.